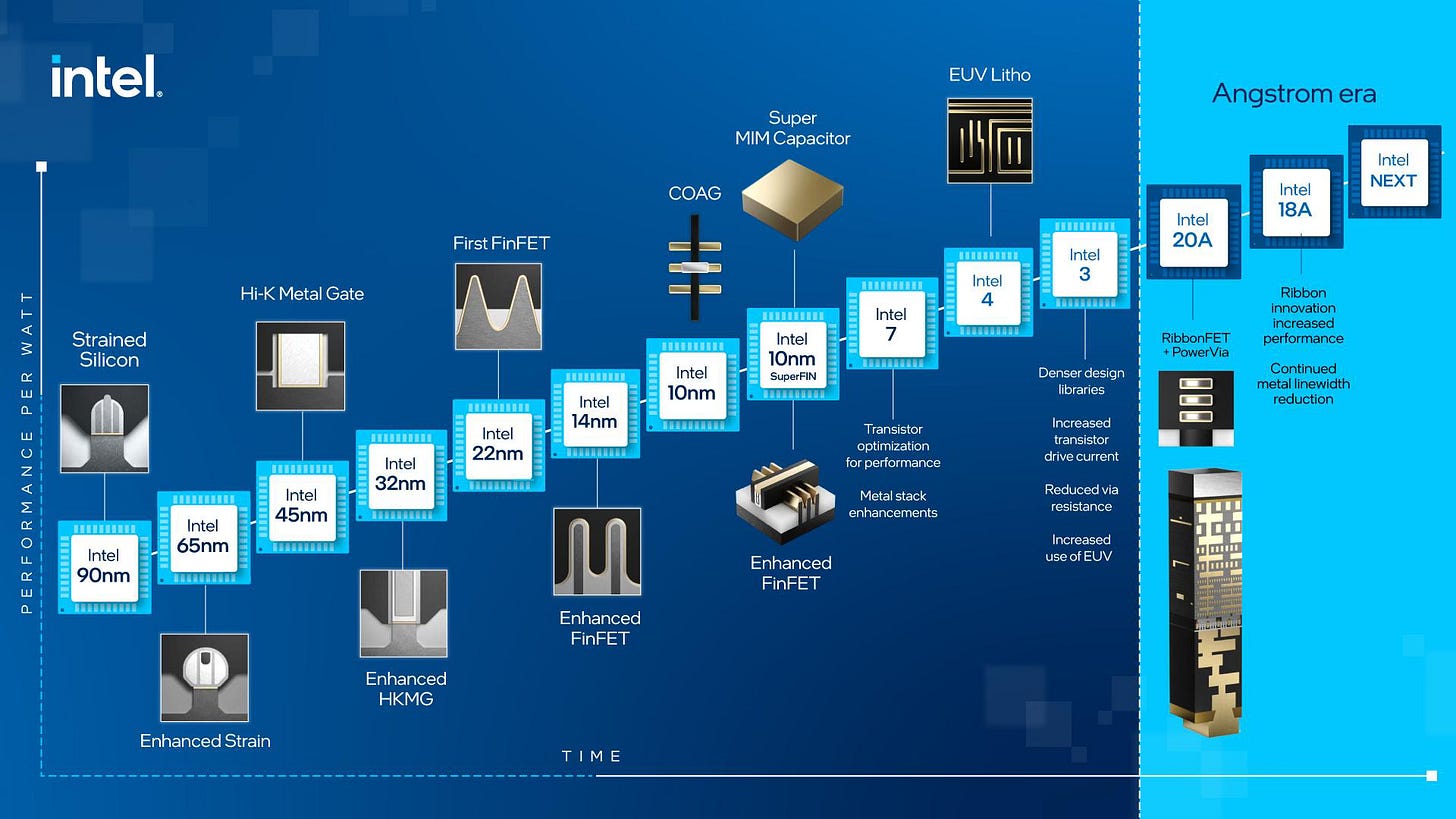

For fifty years, Moore’s Law has been as close to attaining the status of a scientific law as any maxim in technology. Every two years, transistors got predictably smaller, and you could fit roughly double the number of them onto the same piece of silicon. More transistors meant more computing power. More computing power meant better, cheaper digital systems and software capabilities. The engine of the digital economy ran on this precarious physical fact, compounding without interruption for decades.

Figure 1: The steady march of progress in transistor size.
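That compounding is easy to underestimate. An idealized doubling every two years is a growth factor of 2^(t/2) after t years. The short Python sketch below makes the arithmetic concrete; the function name is ours and purely illustrative, and real transistor counts never tracked the curve exactly.

```python
def transistor_multiplier(years: float, doubling_period_years: float = 2.0) -> float:
    """Growth factor in transistor count after `years`, assuming an idealized
    doubling every `doubling_period_years` (a simplification of Moore's Law)."""
    return 2 ** (years / doubling_period_years)


if __name__ == "__main__":
    for years in (10, 20, 50):
        print(f"{years} years: about {transistor_multiplier(years):,.0f}x as many transistors")
```

Over the fifty years described above, that is 25 doublings: a factor of 2^25, or roughly 33.6 million.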

Then the industry hit a wall.

Not a slowdown. A wall – its foundation buried deeply in thermodynamics. Transistor sizes and distances are now measured in nanometers and angstroms. It has become harder and harder to make them meaningfully smaller and closer together. The era of getting more compute just by shrinking features is, for practical purposes, over, and engineers have had to get creative to continue making gains.

For many years, Moore’s Law defined where that wall lived: transistor size and density. The resolution of lithography determined how tightly transistors could be packed, which capped the total computing capability of a given chip. As the spacing between transistors crept closer to its theoretical limits, semiconductor engineers got clever and realized they could keep seeing gains by feeding their chips more power and running them faster. Pumping more electricity through the chips meant concentrating more and more heat into the same small area of silicon. Today, that heat is the driving constraint: the Wall.
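A textbook first-order model of CMOS power (standard background, not something taken from the Corintis work) shows why running chips faster translates so directly into heat. The dynamic switching power of a chip, and the heat flux it produces, are approximately

$$
P_{\text{dyn}} \approx \alpha \, C \, V^{2} f, \qquad q = \frac{P_{\text{dyn}}}{A}
$$

where $\alpha$ is the fraction of the circuit switching each cycle, $C$ the switched capacitance, $V$ the supply voltage, $f$ the clock frequency, and $q$ the heat flux through a die of area $A$. Higher clock speeds generally need a higher supply voltage, so if $V$ is raised roughly in proportion to $f$, power grows roughly as $f^{3}$ while the die area does not grow at all. Every extra watt ends up as heat that must leave through the same few square centimeters.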

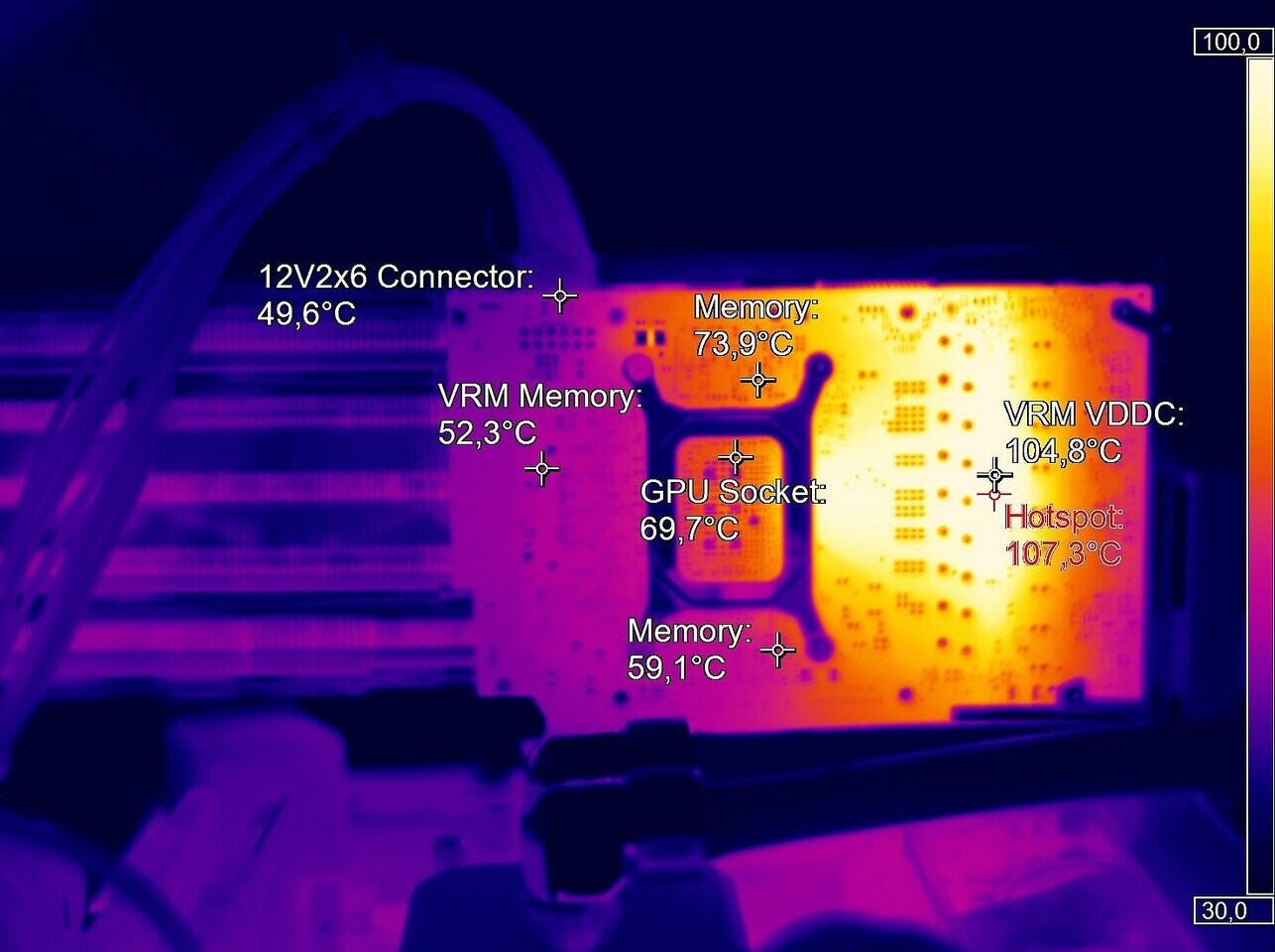

Figure 2: Thermal infrared image of an NVIDIA card under high load. Source.

The wall right now is thermal management. Every data center running serious workloads is, at some fundamental level, a building full of potential electrical fire that must be continuously kept from igniting and destroying itself. And as the race for AI dominance thunders ahead, data center engineers and chip designers are straining to keep ever-larger chips, running at ever-higher power levels, from cooking themselves to an early demise. Roughly 35% of a modern data center’s operating costs and 15% of its construction costs can be attributed to cooling systems. And in a federal lab on the shores of Lake Geneva, two engineers looked at how the entire industry was solving this and said: “we’re attacking this from the wrong direction entirely.”

That’s where Corintis was born.

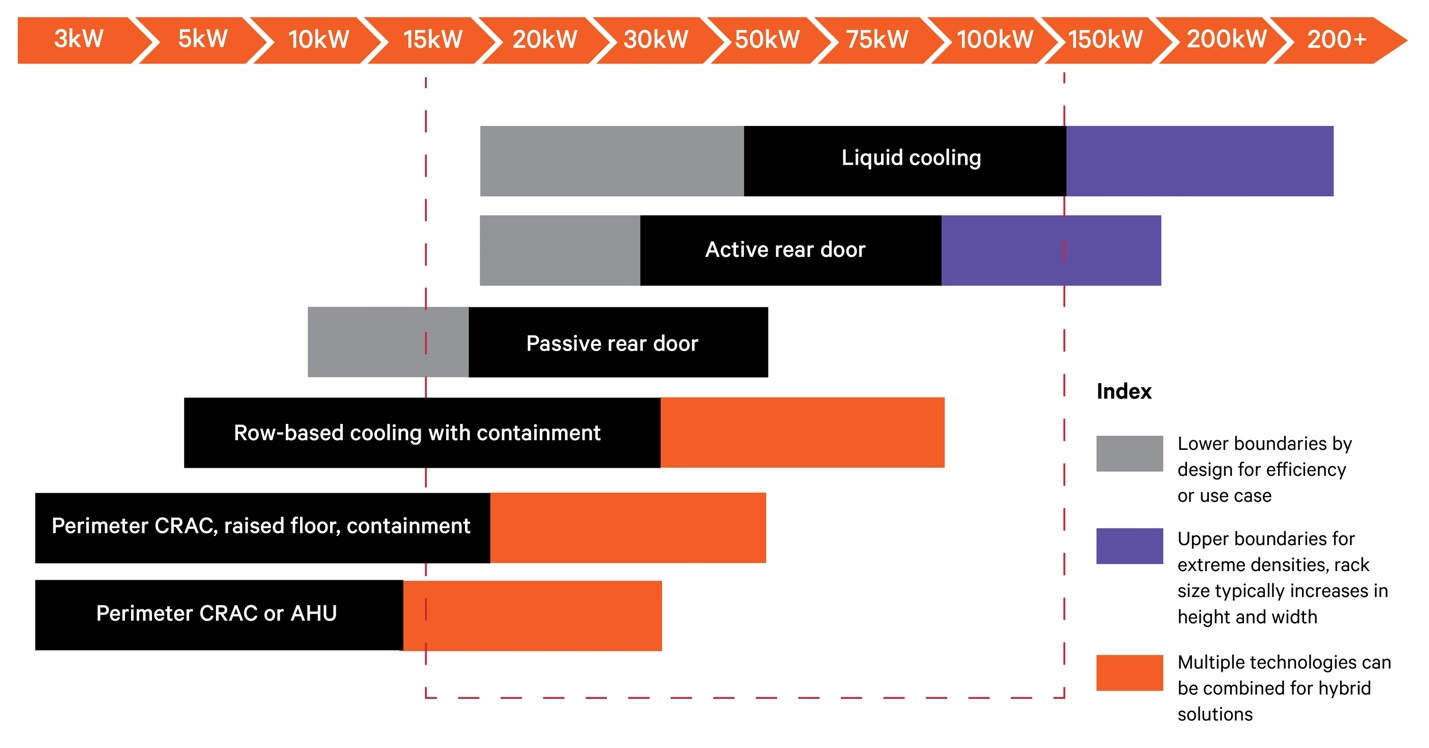

Figure 3: Cooling methods ranked by applicability to modern server rack power levels. Source.

Sam Harrison and Remco van Erp spun Corintis out of EPFL (the Swiss Federal Institute of Technology in Lausanne) in 2022 to rearchitect how the brains of our digital age keep cool. Before we get there, a brief primer on chip cooling.

There are many ways to cool chips. The most common, found in home PCs, laptops, and some server farms, is air cooling. High-end gaming PCs may use a liquid cooling loop. Modern top-end server racks rely on two primary methods to keep things from melting: cold plates or immersion cooling.

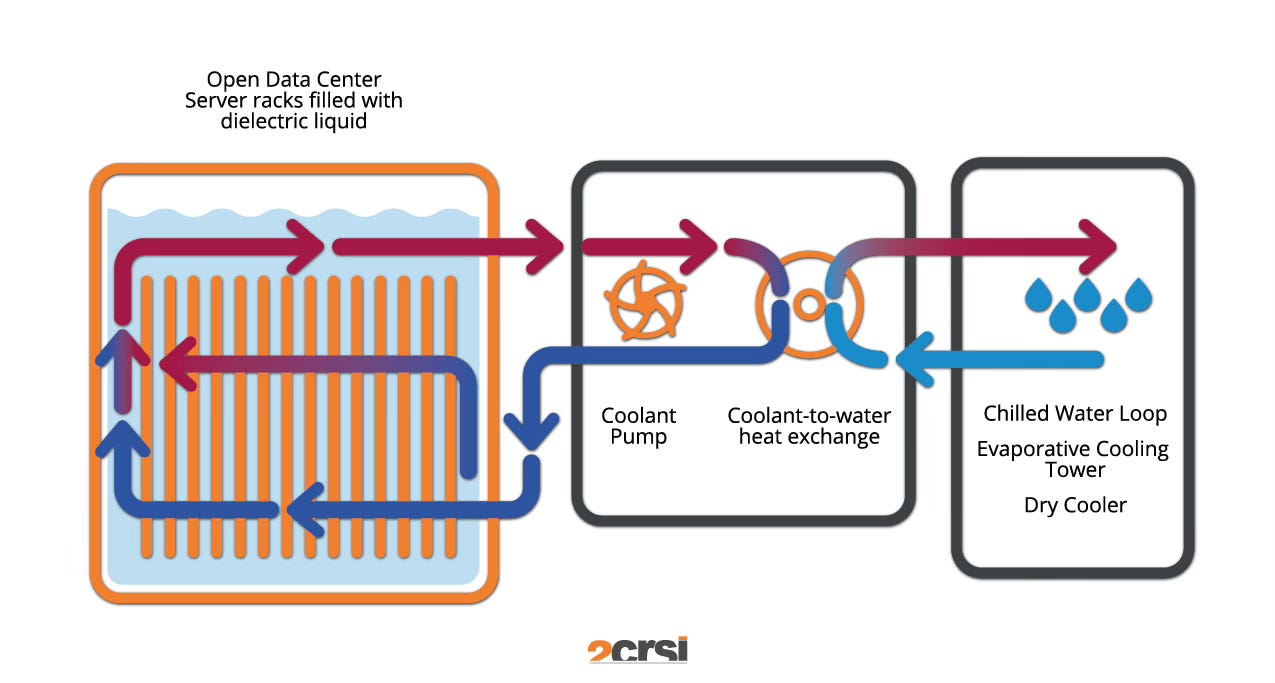

Immersion cooling submerges the entire computing system in a circulating cooling liquid that carries the heat away. This adds significant complexity to a data center’s operations and maintenance and substantially raises the capital expenditure per square foot of functional space.

Figure 4: Immersion cooling schematic. Source.

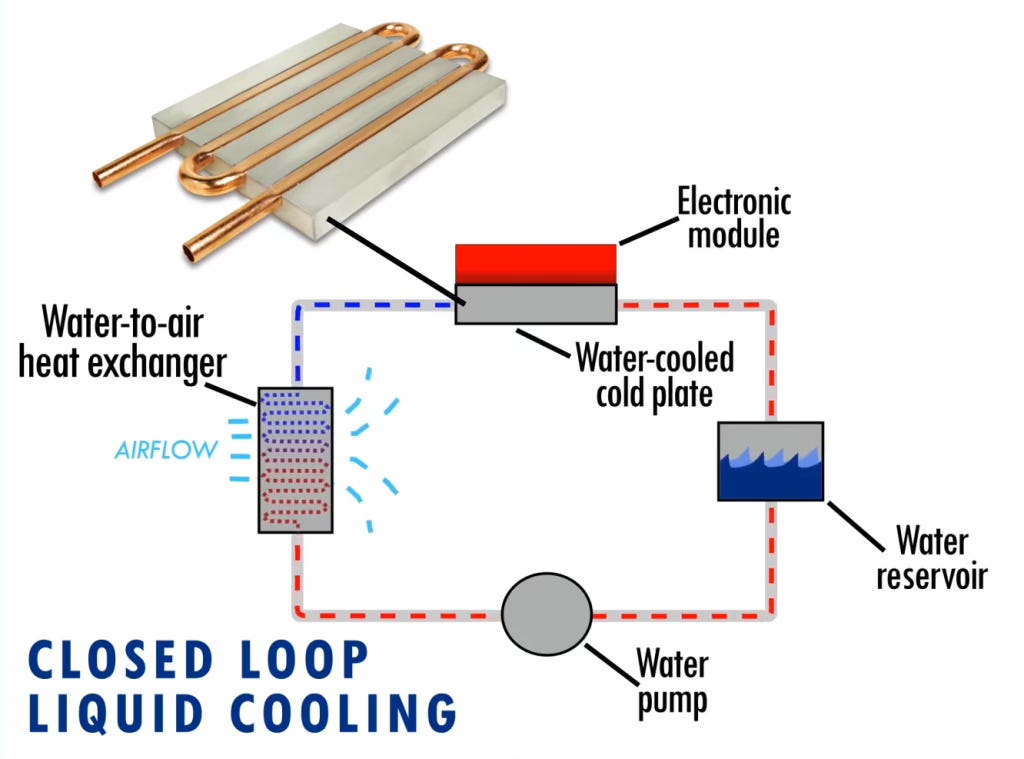

Cold plates are thermally conductive metal blocks mounted directly onto the chips. They pull heat out of the chips and shed it into water flowing through channels inside the plate. They work well for the most part and are commonplace across the industry today. The downsides are added complexity relative to air cooling, reduced server and chip density, and serious risk of leaks and corrosion in the brazed or welded assemblies and their mating hardware.

Figure 5: Cold plate schematic. Source.

Both approaches are a tradeoff: they maximize the power a single chip can handle at the expense of major integration costs and complexity, harder maintenance, lower packaging and overall compute densities, and data center design headaches. Corintis is the first technology to break this stalemate.

Figure 6: Sam and Remco holding an early prototype of their cooling plates.

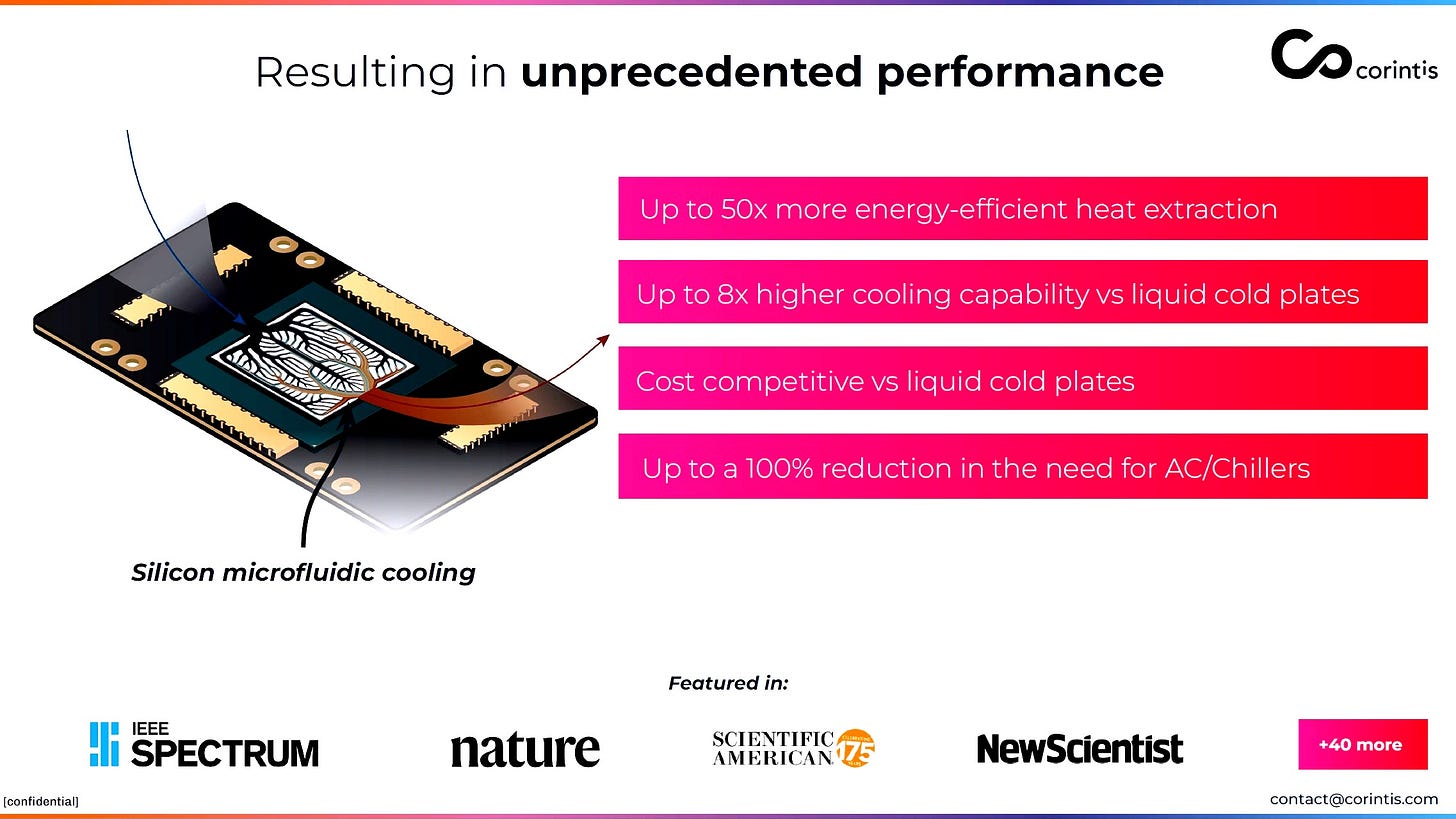

Today, when Corintis designs a cooling solution, it works within the infrastructure already inside data centers, replacing legacy cold plates with upgraded versions of its own design. This has let the company deploy quickly and deliver real value early in its journey. The results from early tests have been astonishing:

Figure 7: Early benchmarks established by Corintis.

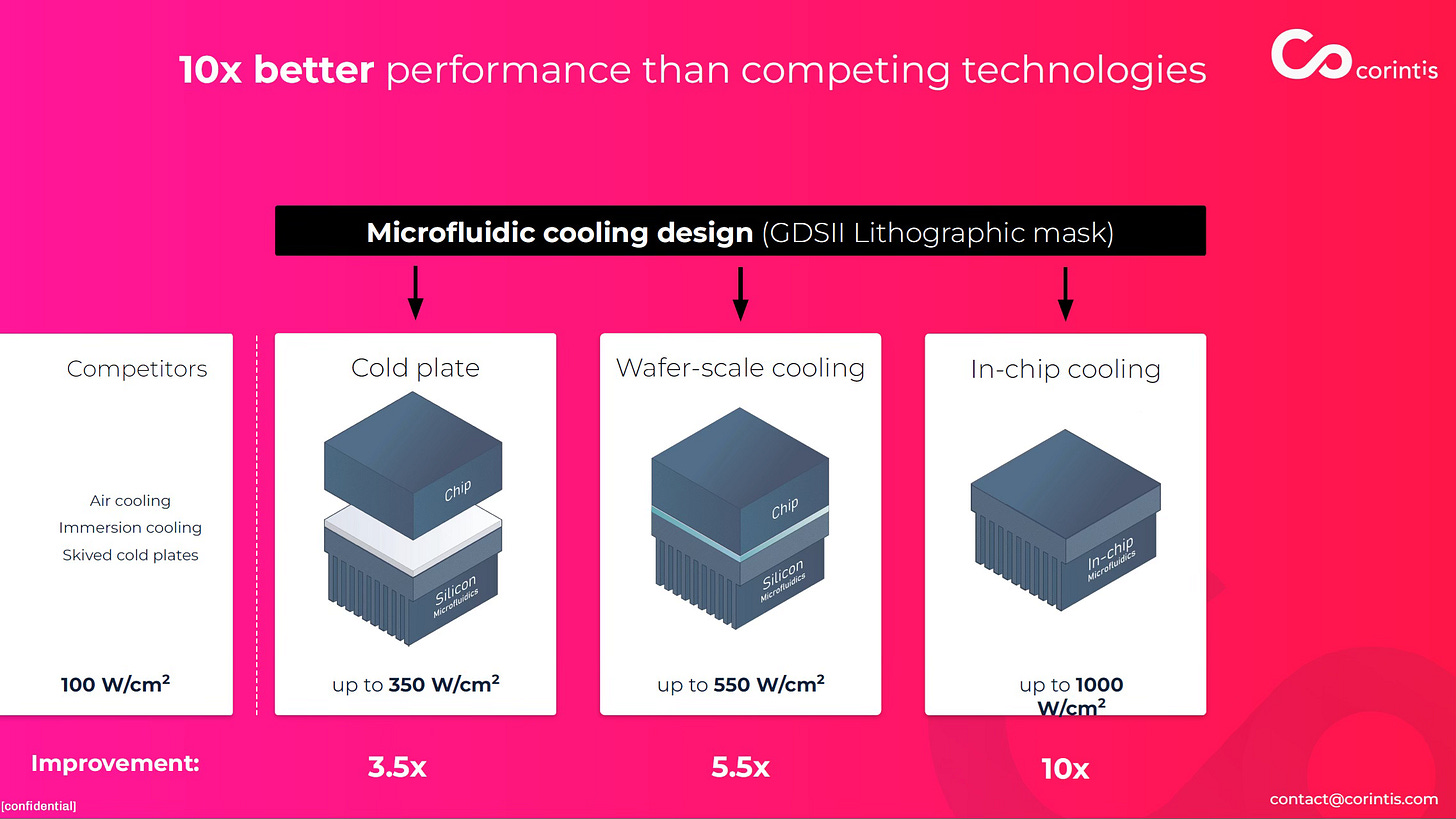

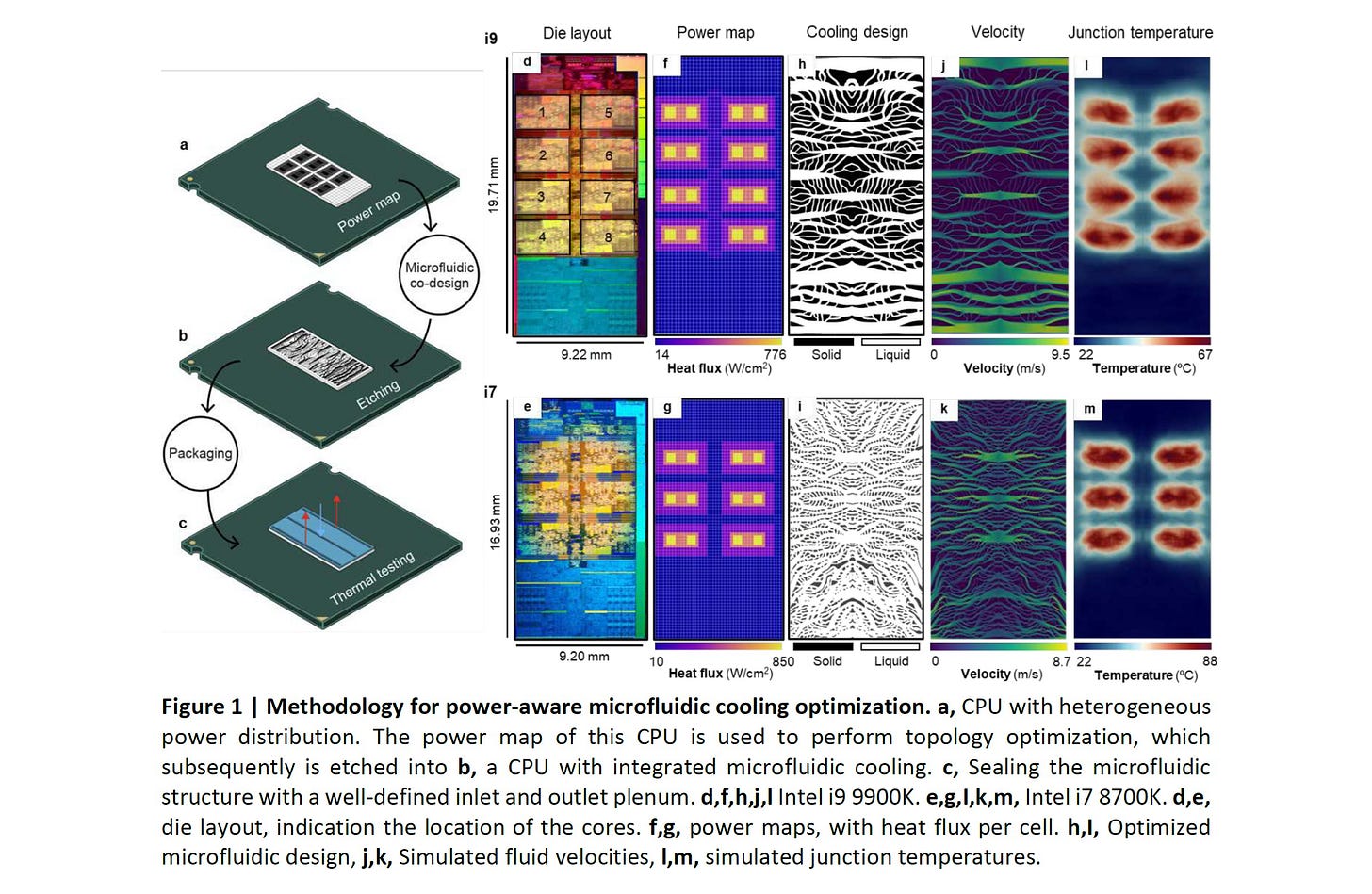

Corintis first maps a chip under intensive load to determine exactly where heat is being generated and where it gets stuck. Next, the team designs a cooling architecture unique to that chip, using biologically inspired patterns that resemble circulatory systems, mycelial networks, or even butterfly wings. Finally, they etch those patterns into microscopic channels that map directly onto the sources of heat. Today those channels live in a drop-in conductive plate, but soon the patterns will live on the chips themselves, letting water extract the highest-grade heat over the shortest possible distance. Cooling fluid is routed precisely to where heat is created and where it currently gets trapped. The result is up to three times better heat removal than standard cold plates, and up to 50x better energy efficiency than standard air cooling. Early partner tests indicate that not only is the cooling much more effective, but the chips themselves can run more efficiently at higher power levels. Tradeoff eliminated.

Figure 8: A current prototype cooling pattern. Source.
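One way to read the “up to three times better heat removal” claim is through a simple lumped thermal model, T_chip = T_coolant + P × R_th, where R_th is the thermal resistance between chip and coolant. The sketch below assumes that “three times better” means one third of the thermal resistance; that interpretation, the function name, and every number in it are our own hypothetical illustrations, not Corintis data.

```python
def max_power_w(t_max_c: float, t_coolant_c: float, r_th_k_per_w: float) -> float:
    """Largest steady-state power (W) that keeps the chip at or below t_max_c,
    using the lumped model T_chip = T_coolant + P * R_th."""
    return (t_max_c - t_coolant_c) / r_th_k_per_w


# Hypothetical values chosen only to illustrate the ratio; not Corintis data.
T_MAX_C = 90.0       # assumed maximum allowed chip temperature, deg C
T_COOLANT_C = 30.0   # assumed coolant inlet temperature, deg C
R_BASELINE = 0.05    # assumed thermal resistance of a standard cold plate, K/W
# Assumption: "3x better heat removal" read as one third the thermal resistance.
R_IMPROVED = R_BASELINE / 3

baseline = max_power_w(T_MAX_C, T_COOLANT_C, R_BASELINE)
improved = max_power_w(T_MAX_C, T_COOLANT_C, R_IMPROVED)
print(f"baseline: {baseline:.0f} W, improved: {improved:.0f} W, "
      f"ratio: {improved / baseline:.1f}x")
```

Under this model, cutting thermal resistance to a third lets the same chip dissipate three times the power at the same temperature limit, or run much cooler at the same power. Real packages stack several resistances in series (die, interface material, plate, fluid), so the whole-package gain depends on which of those terms improves.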

Eliminating that tradeoff is more than a nice-to-have; it unlocks an entirely different paradigm for everyone in computing. Existing chips can be pushed harder for longer. New chip designs and form factors become possible, higher chip and server rack densities can be built, and chips last longer. Better outcomes for everyone.

Figure 9: What Corintis’ technology can unlock.

We first heard about Sam and Remco through Founderful Ventures, a Zurich-based fund that has made a habit of finding Swiss technical talent before the Americans show up with term sheets and Silicon Valley premiums. We invested at seed in 2022. We followed into the Series A and again into the Series A1. Corintis now has seventy-five people, multiple sites, relationships with the industry’s premier companies, and was just named the 2025 Top Swiss Startup.

But here’s what matters most, at least right now.

The industry’s next architecture is not cooling-constrained by accident. It’s cooling-constrained by design choices made when the heat problem was manageable and the stakes were lower. You could afford to cram more power through when transistors were farther apart, but that tension is now unavoidable: as transistors get smaller, you either lower the power to keep things safe or you get the heat out. Stacking chips (the thing that unlocks significantly higher transistor density, shorter data paths, lower latency, and higher bandwidth) has been theoretically possible for years. It has remained impractical for high-power chips because nobody had solved the thermal problem at the chip level. Corintis has.
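The reason stacking is so thermally punishing comes down to simple arithmetic: dies stacked on the same footprint add their power, but the area available to carry that heat away stays the same. The sketch below illustrates this with hypothetical die powers and a hypothetical footprint; the function name and numbers are ours, not measured data.

```python
def footprint_heat_flux(die_powers_w: list[float], footprint_cm2: float) -> float:
    """Average heat flux (W/cm^2) leaving through a shared footprint.

    Every die in the stack sits on the same footprint, so their powers add
    while the area available to remove heat stays fixed. (In a real stack the
    die farthest from the cooler must also push its heat through the dies in
    between, which makes matters worse; this sketch ignores that.)
    """
    return sum(die_powers_w) / footprint_cm2


# Hypothetical numbers for illustration only; not measured data.
single = footprint_heat_flux([500.0], footprint_cm2=8.0)
stacked = footprint_heat_flux([500.0, 500.0], footprint_cm2=8.0)
print(f"single die: {single:.1f} W/cm^2, two-die stack: {stacked:.1f} W/cm^2")
```

Two equal dies double the heat flux through the footprint; N dies multiply it by N, which is why conventional cooling bolted on from the outside runs out of headroom so quickly.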

Microsoft understood the thermal bottleneck early. It ran a prototype cooling server handling live Teams traffic. It worked. Large quantities of customized cold plates are now shipping to tech companies in the United States. Corintis has also recently opened two new offices: one in Munich and one in Bellevue, Washington. Bellevue is a fifteen-minute drive from the Microsoft campus that gave Corintis its first serious validation, and the area is home base for several of the largest potential beneficiaries of its technology in the world.

Figure 10: A snippet of Corintis’ approach as published here.

There’s a pattern we keep seeing when we move outside Silicon Valley. From a distance, Swiss and European technical teams working on foundational infrastructure problems often look to US investors like they lack ambition. The assumption is that because the founders haven’t uprooted their lives to attempt a geographic arbitrage, or aren’t constantly in front of a camera, they’re not serious. These founders don’t care. They’re not feeding hype cycles. They’re not doing demos at NeurIPS. They’re not on the podcasts, and they’re rarely at the dinners. They’re in the lab helping run experiments, write docs, and answer emails. They’re building.

Then suddenly, they have a public breakthrough, and the word gets out.

Acecap has always believed that the access network matters more than geography. We met Corintis through relationships. We connected them to some of their major customers through our network. We continue to take part in subsequent rounds well above our pro-rata because we were there before the word got out, helping them build. The Swiss don’t hype their infrastructure companies even after they’ve made it, much less before. In our eyes, that’s not a bug; it’s information asymmetry.

The heat problem isn’t going away. If anything, the next generation of AI computing requirements makes it worse, considerably worse, almost comically worse if you do the math on what frontier model training requires at scale. And the companies building the chips, the racks, the power, the substations, the switchgear, and the cooling systems that make this all possible (the picks and shovels, always and forever the picks and shovels) are where we’ve always wanted to invest. New computing topologies will be scaled, new math, materials, and manufacturing will appear, and we will be there helping teams build them.

For a deeper technical look at the early work, see this paper: https://arxiv.org/pdf/2408.15024

If you want to connect with Corintis or learn more about the future of compute that we continue to invest in, we’d love to chat.